This year, I started The Blind Leading the Blind podcast with Kunal Malhotra, a good friend of mine from college. We started it on a whim, since we share interests in reading, writing, gaming, and TV and had already spent a lot of time discussing our perspectives. So far, we’ve recorded and published five episodes, the latest of which is about ‘AI & Writing.’

I am so excited that we covered this topic, because it’s something personal to me. I’ve spent the last few years dedicating all my working hours to writing my book, which already feels like an uphill battle (and sometimes a ludicrous idea to begin with). On this journey, I like to stay informed about how the field will change and grow, not only out of curiosity but also to understand the environment I will enter once my manuscript is pitch-ready. As a result, it’s been impossible for me to miss the ever-growing relevance of AI in publishing and the writing community’s response to it.

I read a few articles in preparation for this most recent episode, and some of the quotes in them resonated with me. One from Mary Rasenberger, the CEO of the Authors Guild, particularly stood out: she said that “creators are feeling an existential threat to their profession.” Kunal and I discussed how that feeling, while not unique to the writing industry, is entirely valid, considering that generative AI models have been trained on copyrighted material.

So, in this episode of Emily’s Desk, I’d like to touch upon some of the topics we discussed, specifically:

- The debate over who owns AI-generated creative work

- How the value of non-AI-assisted creative work may change over time

- What level of AI usage is considered ‘acceptable’ within the writing field

- Adapting to an AI-integrated world as a writer

Part 1: Who owns AI-generated creative work?

Published authors and writers are determined to safeguard their livelihoods by (1) protecting their copyrighted work from being used without their consent or without compensation, and (2) ensuring that companies that produce content don’t opt to use AI instead of hiring them.

At present, there are several ongoing lawsuits in which plaintiffs in creative fields have sued AI companies over the unauthorized use of their copyrighted work to train AI models. Given the traction many of these suits have gained in the courts, they clearly have legal standing. But these lawsuits are about how AI models are trained. From a philosophical standpoint, the question of who owns AI-created work isn’t so easy to answer.

The traditional idea of ownership in creative fields is typically based on intentionality, creativity, and originality, qualities that are inherently human. But AI isn’t conscious (despite having passed the Turing test, as Kunal pointed out on our podcast). It seems more reasonable to view AI as a tool than as a sentient being. So the natural next question is: which human involved in making the AI-created work is its owner? Is it the programmer, the user, or the owner of the company? Perhaps ownership should be split, but between whom?

At present, there is no clear consensus on the answer, likely because the issue of creator attribution and consent is still unresolved. The obvious financial motives behind AI companies’ overreach make this issue, and any hope of a quick resolution, unsettlingly murky, and those same financial motives will most likely end up determining the answer to the ownership question, at least legally. On the podcast, we discussed the political problem of lawmakers who are frustratingly unfamiliar with the pace and scope of the digital world we live in. Until we have legislation that explicitly protects creators, their only legal recourse is to build arguments on laws that haven’t been updated in decades.

Part 2: Is AI-assisted work of lesser value?

Our conversation raised another interesting question: will non-AI-assisted creative work appreciate in value over time? I would say yes.

Kunal mentioned that the creators of Top Gun: Maverick weren’t transparent about the extent of their CGI usage. He argued that people don’t generally mind extensive CGI if producers are transparent about it and the quality is good. I agree, but why weren’t they upfront about it in the first place? I believe the motivation was the recognition that, in creative fields, people value human-made work more.

There are countless other examples of technology evolving over time to replace human labor in creative and craft fields: clothing manufacture, photography, bread-making machines. And there’s a reason the ‘handmade’ label works as a selling point. As AI becomes more seamlessly integrated into creative fields, its absence from the production process will likely become a selling point too.

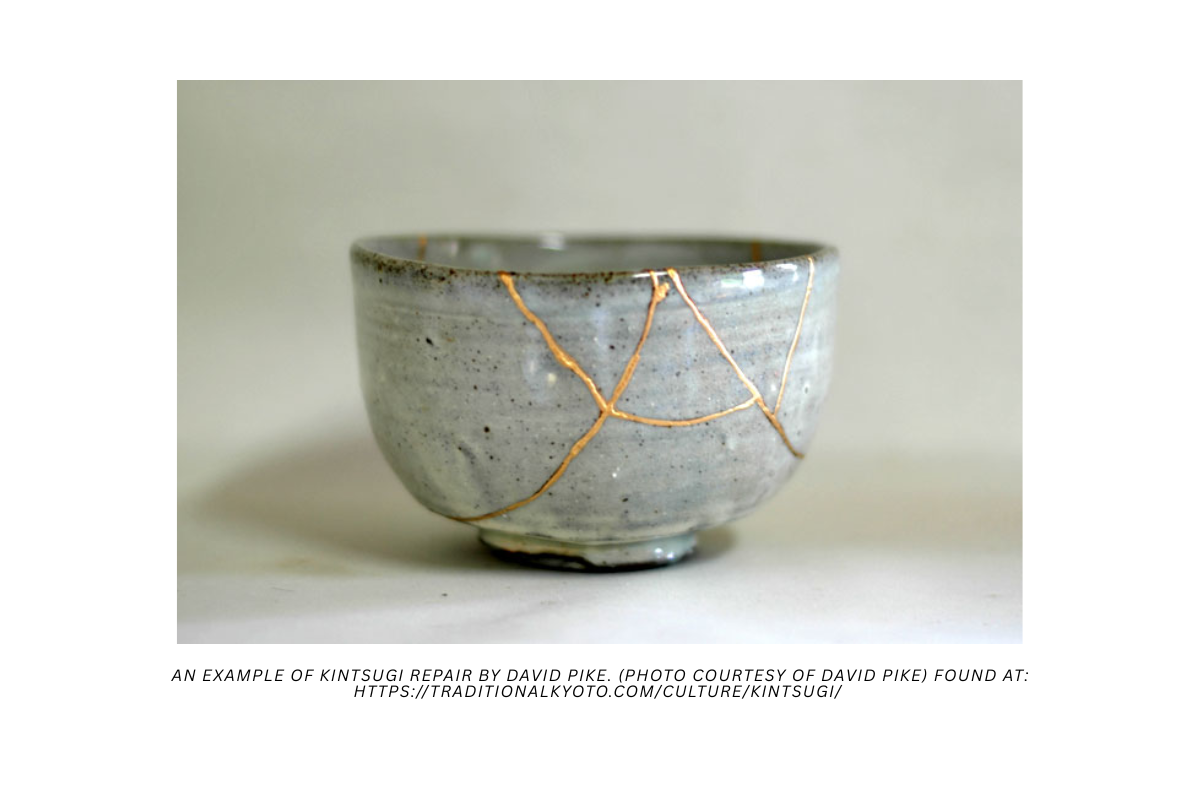

In a world where AI creates products with the mechanical precision of a computer, it’s easy to imagine human imperfections becoming endearing. I think of the Japanese practice of Kintsugi, in which broken pottery is repaired with a lacquer that highlights the cracks with a metallic finish, making the damage part of the object’s beauty (see image above).

There’s also the matter of scarcity. As more creators begin using AI, non-AI-assisted work will become rarer, and thus more desirable.

Finally, there’s the nostalgia factor. There’s a reason people still collect vinyl records, and why you can still buy a Polaroid camera, a typewriter, or a handheld radio. These objects carry significance not only because of their association with a romanticized view of the past, but also because that romanticized view can become an entire aesthetic in today’s social climate. My views on the supercharged, performative nature of our (online) identities are a topic for another time.

Part 3: What level of AI usage is acceptable in writing?

Having considered all of the above, I have to ask myself whether there will ever be an acceptable use of AI in the creative space. To narrow the scope, I’ll focus specifically on writing.

Opinions vary, though they notably lean toward “no.” Outside of creative writing, however, there seems to be less pushback. In journalism, for example, Kunal and I discussed a scenario in which reporters could spend more of their time on investigation and editing and less on writing up their reports. From our limited perspective, we saw this as a net positive, since reporters could then make more progress on the data-gathering side of their work. But, of course, there is more to the writing industry than reporting.

I think there could also be an acceptable level of AI use in nonfiction. For example, if a writer uses AI to organize their notes and research on a historical figure, I don’t see an issue with that. As we mentioned during our episode, search engines technically qualify as AI, and I’d wager that most of us have used Google Search at some point to write a research paper for school. So when AI is used as a tool for handling data and information, I don’t see much of an issue.

The creative writing space is trickier. While most people seem to agree that having AI do the actual writing is a no-no, opinions are split on using AI as a writing partner. Some people see the practice as useful. Others think it’s cheating. Here, the question becomes one of a writer’s authentic voice. Is a piece of writing still ‘authentic’ if it contains words suggested by an AI? Is a story authentic if the AI came up with an idea that dramatically changes the writer’s plot? If you say “no,” how is this any different from a writer leaning on their writing group to improve their work? Is it different only because the AI isn’t a human being? Unfortunately, I don’t see much discourse about this aspect of the debate, because the focus seems to be on the current lack of transparency and on rejecting AI entirely, both of which stem from fear.

The lack of transparency stems from AI users’ fear of being labeled frauds if they are honest (ironic, I know), while the wholesale rejection of AI stems from the fear of being replaced. Both relate directly to the concern that AI will replace ‘authenticity,’ a concern I don’t think will ever go away. Thus, the only tangible takeaway I see from this discussion is that, in the face of the unstoppable force that is AI, transparency is necessary. AI is here, and it’s here to stay. The best we can hope for is a world in which writers who choose to use it are honest about their creative process.

Part 4: How can writers adapt to an AI-integrated world?

Just as we’re not going back to the horse and buggy, we’re not going back to a world without AI. So, where do we go from here? My plan going forward is informed not only by the little bit of research I did for this most recent episode, but also by the knowledge that tech companies (the owners of these massive AI tools) have a poor track record on transparency. For me, that means understanding that anything I post on their platforms is at risk of being stolen. This is one of the major reasons many visual artists have moved away from posting their work on Instagram and onto other platforms, such as Bluesky, Glass, or Substack, to name a few. I expect these alternative platforms to keep gaining popularity over time.

This also means that when I’m ready to share my manuscript, I will need to ask what protections a publishing house offers the writers it works with. I can register the copyright for my work on my own (and technically, I already own my work automatically upon creation), but once my work is out in the world, who will stop bad actors from using it without my knowledge or consent? On this point, I simply need to be aware and careful.

Finally, I need to keep an open mind. Though I still find it unpalatable for AI to do the hard work of writing for a user, I understand that in ten or twenty years’ time, my perspective might no longer be as widely shared. While I don’t think I will ever change my mind about the importance of teaching students how to craft a logically sound argument, a persuasive essay, or the like, I can see the methods for teaching those skills changing over time. I can already see how classrooms have changed since I was in school: students no longer lug textbooks back and forth because their course materials are all digital. Increasingly, assignments are completed, submitted, and graded online. There is currently a private school called ‘Alpha School’ that touts a two-hour learning model in which students “complete core subjects in just 2 hours daily.” I’m curious to see how these kinds of advances will eventually show up in the creative space. In the meantime, I will keep working as I always have: eager to improve my craft and constantly vigilant.

I hope you enjoyed today’s episode, and I’d love to hear your perspective on this topic! Is AI the grave threat that some writers believe it to be? Do you have a unique take on the ownership debate? Where and when do you think using AI is acceptable in the writing field?

Let me know what you think in the comments below, and until next time…

Get writing!

Emily